Every week, 700 million people use ChatGPT as a virtual companion, a search engine, or a guide for the perfect recipe. In the United States alone, nearly 8 in 10 older adults [PDF] have used artificial intelligence (AI) in some capacity. Increasingly, for frail and depressed elders, AI chatbots and applications offer caregiving support, combat loneliness, and boost psychological well-being. But experts warn that AI can pose a risk to older adults, a third of whom report loneliness and a fourth of whom are socially isolated.

Regulatory efforts to protect elders against AI-related risks are emerging across the United States. In 2025, Utah introduced bills that expand existing AI-disclosure and consumer-privacy laws, and this month, New York is launching a scam-detection tool for seniors. In a recent letter [PDF] to the U.S. Senate Special Committee on Aging, U.S. Senator Mark Kelly (D-AZ) urged Congress to examine the impact of AI chatbots and companions on older adults, and to implement "oversight and stronger safeguards before more people get hurt."

Although those new laws and proposals hold promise for stronger protection against AI risks, President Donald Trump's executive order to place a federal moratorium on all AI laws could upend such state-level advances, and it has already triggered strong opposition from 36 state attorneys general.

Older adults facing grief, frailty, and loneliness, a population already at high risk for abuse and exploitation, are easy targets for criminals who use AI technologies to produce chatbot-generated conversations, photos, and deepfakes.

Seeking connection and comfort, those adults may turn to a social media platform such as Facebook or download a dating app, click on an inviting link, and unknowingly spark a conversation with an AI chatbot posing as a real person. But behind that chatbot is a criminal working to scam victims for their personal information and to propagate malicious software.

AI can also help cybercriminals fine-tune their craft. An experiment led by Harvard University and Reuters demonstrated how scammers are using AI chatbots to fabricate successful phishing emails targeting seniors in a seamless criminal-AI collaboration. With estimates showing that 82% of phishing emails use AI to bypass traditional malware detection, unsupervised older adults could find themselves clicking on a malicious link that would have otherwise been screened. Criminals are also using AI to glean information through "grokking," a new tactic that involves hackers using X's built-in AI assistant Grok to promote malicious URLs across the app for millions of users.

The prevalence of these scams prompted the Federal Bureau of Investigation to warn of a record-breaking spike in cyberattacks against older adults.

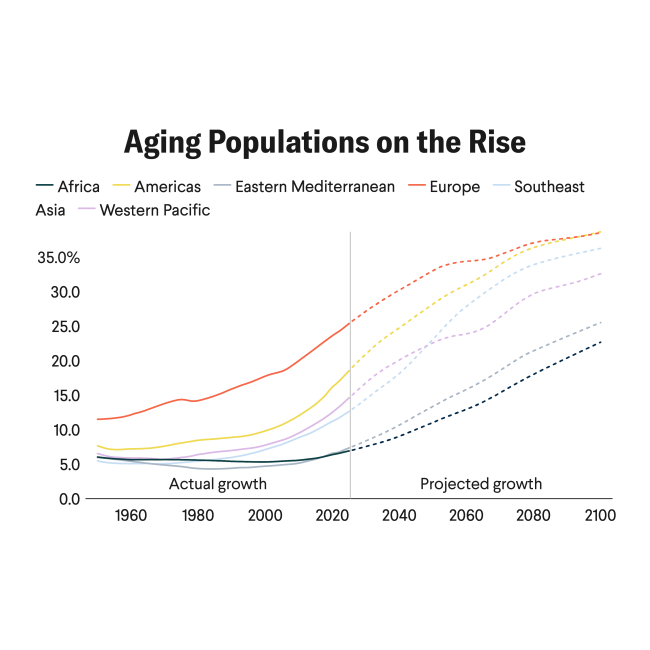

Still, legislative and technical safeguards are outpaced by a wave of aging adults and a global dementia population projected to grow from 55 million to nearly 140 million by 2050. That demographic shift could lead to a surge in elders who are more disconnected and emotionally detached from their support networks, leaving them vulnerable to fraud.

"Malicious use of AI is…the largest proportion of [risk] incidents reported," says Peter Slattery, lead of the MIT AI Risk Repository project—a global database and analysis of over 700 harmful events linked to AI. Victims may fall prey to scammers seeking customer service or online tech support, he explains, starting with an attractive image or a seemingly urgent threat in a phishing email, as was the case in the widespread DHL chatbot scam in 2022.

As users continue to engage with fake chatbots, scammers at the other end continue to extract personal data and "use that information to tailor their messages and make [them] sound more believable," pulling victims deeper into the scheme, says Slattery.

Criminals rely on hyper-realistic relationships to gain trust while using shame and secrecy to keep victims "under the ether," says Marti DeLiema, a fraud and elder-abuse researcher at the University of Minnesota. "It is nearly impossible to get [the victim] to break the relationship with the scammer." Artificial intelligence can augment the realism of a criminal's veil, giving scammers a more authentic, personal, and persuasive voice. For older adults, an inherent difficulty distinguishing between what is real and what is fake presents a great risk, DeLiema says.

Unmasking disguise is a challenge for older adults who "seem to fall more…for certain weapons of influence," says Natalie Ebner, an elder-fraud researcher at the University of Florida. Older adults have more truth bias than younger adults, making them less adaptable or suspicious, and vulnerable to lies and deception, she explains.

"We're already in crisis mode," says Sara Meyers, an elder attorney specialist in financial exploitation. "Scammers are much smarter than the rest of us, or we haven't caught up yet," and with AI, "it's just going to get harder," she says.

Combating Sycophancy

Rapid growth in the aging population has also catalyzed an uptick in the global market for AI in elder care, which grew from $35 billion to $43 billion this past year and is projected to triple over the next three years.

As users and their caregivers turn to chatbots for emotional companionship, experts warn of more harm as dependence on these digital friends grows.

"The more you use a companion chatbot…the more you develop problematic use," says Auren Liu, a researcher who studies the intersection of AI and health at MIT Media Lab. In her studies [PDF], the most socially isolated users spent longer hours in conversation. Over time, they became more lonely, disengaged, and emotionally reliant on the chatbot.

Designed by code to keep users engaged, comforted, and wanting more, the chatbot may lean into—and amplify—a person's distorted or unhealthy perceptions and deepen their sense of loneliness, further isolating them from friends and family as their dependence on technology grows.

"When what is artificial feels real to you, that can also affect your reality in negative ways," Liu cautions. In response to growing concerns for consumer safety, the American Psychological Association released a health advisory warning users to recognize the false sense of trust and credibility of chatbots promising to deliver therapy. "We need to do a much better job of safeguarding [users] to help these tools be useful…and keep people out of trouble," advises Walter Boot, professor of psychology and aging technology researcher at Weill Cornell Medicine, who contributed to the advisory.

But changes are slow to arrive as developers of AI chatbots including Character.ai and Replika continue to disseminate products branded for lifestyle or companionship to avoid medical-device classification, even as such "wellness framing no longer aligns with real-world risk," wrote members of Data & Society, an independent research organization, in a comment to the Food and Drug Administration [PDF].

Harms of AI technology are quickly outpacing defense applications. As a society, "we're going to have to improve our understanding of [AI's] potential to harm" in order to protect elders and vulnerable people, Slattery cautions.

As families and friends rush in to intervene, many efforts to combat elder fraud are met with struggle. Victims themselves are often unable to recognize the fraud, and some even welcome the ongoing comfort of intimacy. "A lot of [victims] have a lot of trauma already…they come into this with harm…that's why they're so susceptible, and not even picking up on [the] signals," DeLiema explains.

In spite of glaring signs of fraud, breaking the spell is morally conflicting, DeLiema says. One romance-scam victim, who chooses to remain anonymous, adds: "I know she [the bot] doesn't really love me…but guess what? She texts me when I wake up…My children won't even call me once a month."

Although legal experts are encouraging seniors, health providers, and caregivers to increase awareness around AI risks and fraud, others say protection requires more than education.

Distributing a piece of technology that is both useful and harmful for seniors, while expecting users to fully understand and take responsibility for all the risks, "[is] just foolhardy," says Clara Berridge, an elder care and digital technology researcher at the University of Washington. Building awareness around AI risk, forging strong safety nets, and recognizing early signs of mental illness among people in close communities is a "collective responsibility…to make sure people at all ages are socially integrated [and] feel a sense of belonging," she adds.

While ageism continues to influence attitudes and drive people apart, a warm embrace, a helping hand, or a friendly chat can revive a lost connection with a lonely adult.

As the lines between truth and deception blur dangerously in the age of AI, Meyers speaks to the value of preserving human connection. Remembering the family dinners with her husband and children, she asks:

"In this…digitally divided world, what are the things that we really should be holding on to?"

EDITOR'S NOTE: An earlier version of this article misstated the first name of the MIT AI Risk Repository project's lead, Peter Slattery.